Worth reminding you perhaps that gather(AA) does not move AA to the CPU; it creates a temporary variable on the CPU and copies AA there. Hopefully we will soon have our own monitor apps so you can more easily see this behaviour in MATLAB. That means the GPU isn't always being used when a line of code is run, it doesn't always finish when the next line of code is run, and memory no longer assigned to a variable is not necessarily released back to the system to show up as free in the Task Manager. Whatever you're seeing in the Task Manager is heavily confused by the fact that MATLAB's GPU functionality pools memory and operates lazily and asynchronously. So use gpuArray(gather(AA)\gather(rhs)) rather than just gather(AA)\gather(rhs), to ensure U1 is still a gpuArray on output.

As a developer of the gpuArray datatype, I'm usually - not always, but usually! - pretty confident in my assertions about its behaviour. Just before I started writing this I thought that maybe I should look up and see what gather actually does.

Do make sure you move the result of backslash back to the GPU so that subsequent operations take place on the GPU.

Your suggestion of rhs clearly works no matter how big xRange is, because I can get results for it even when the program falls over, which just leaves me the question of why U1 = gather(AA)\gather(rhs) works and works quickly whereas U1 = AA\rhs does not and gives NaN. However, that does not make sense when you get to the for loop. So everything is now on the graphics card after a lot of one-off calculations on setup. All the others are well zeroed and therefore sparse or else they are simply vectors. However, this all sounds fine until I realised that U, which is the output, sits quite happily in the GPU card memory. However, trying to create A or AD or AS on the graphics card runs out of memory much faster (54+). This is where, if xRange is very large (300+), the program runs out of memory.
My limited understanding of implementation suggests that the problem is AS. The CPU-only run, before I had the NVIDIA card (xRange = 320), took 23+ hours for a single run, whereas this morning xRange = 360 took 5 minutes. When I tried 400, because the card appeared to be less than "full", it fell over because my CPU RAM (128 GB) ran out of memory, not the card! I have therefore used the main memory to create the large matrices and to sparse them and then loaded them to the card. I have been watching the Task Manager carefully as the computer handles the code. The "out of memory" seemed to happen because the graphics card maintained both versions. It transpires that whilst the graphics card works with sparse OK, it appears to me that it manages memory poorly. I tried to be clever and went for 240 and it fell over, but I have spent a bit of time thinking about sparse. Firstly everything ran fine up to xRange = 200. There are more than three files. This morning has been very productive.
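The advice in this thread (solve on the CPU, then put the result back on the GPU) can be sketched roughly as follows. This is only an illustration, not the poster's actual code: AA, rhs and U1 are the names used in the thread, while the size n and the tridiagonal test matrix are made up for the example. It assumes Parallel Computing Toolbox and a supported GPU.

```matlab
% Minimal sketch. AA, rhs and U1 follow the names in the thread; the
% size n and the tridiagonal test matrix are hypothetical stand-ins.
n   = 1000;                            % hypothetical problem size
AA  = gpuArray(gallery('tridiag', n)); % sparse system matrix, stored on the GPU
rhs = gpuArray(ones(n, 1));            % right-hand side, stored on the GPU

% gather copies the data into CPU memory; AA and rhs still exist on the GPU.
AAcpu  = gather(AA);
rhscpu = gather(rhs);

% Solve on the CPU, then wrap the result so U1 is a gpuArray again and
% subsequent operations keep running on the GPU.
U1 = gpuArray(AAcpu \ rhscpu);

% GPU work is asynchronous, so wait for it to finish before timing
% anything or reading memory figures.
wait(gpuDevice);

disp(class(U1));   % class of the result
```

Keeping the gathered copies in named variables (AAcpu, rhscpu) makes it explicit that two copies of each array exist while the solve runs, which matters when memory is tight; clearing them afterwards lets MATLAB reuse the CPU memory.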

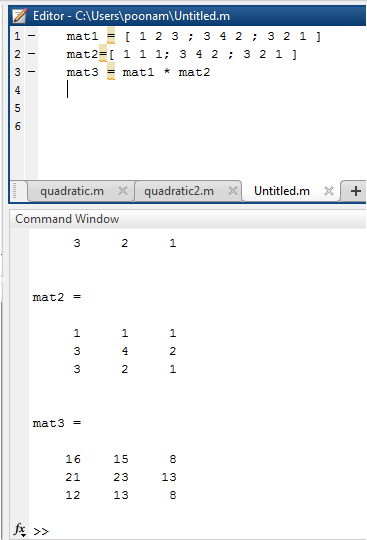

I’m curious if anybody has thoughts on the analysis I did: are there other options I should try, other circumstances in which the matmuls are occurring, something I overlooked? Thanks!

_summary.md

Here I compared the effect of different compiler optimizations in both Fortran and C for a program that multiplies a matrix with a vector.

| Options | C (loop) | Fortran (intrinsic) | Fortran (loop) |

I compared with both Fortran and C, and got essentially the same top speed but Fortran’s matmul intrinsic was much faster with no optimization turned on (and interestingly gets slowed way down by -O3). Hi, I’m looking at the impact of different compiler options on the speed of vector matrix multiplication.
